VSAN in vSphere 6 reminds me of Steve Austin, the Bionic Man:

“Gentlemen, we can rebuild him. We have the technology. We have the capability to make the world’s first bionic man. Steve Austin will be that man. Better than he was before. Better…stronger…faster.”

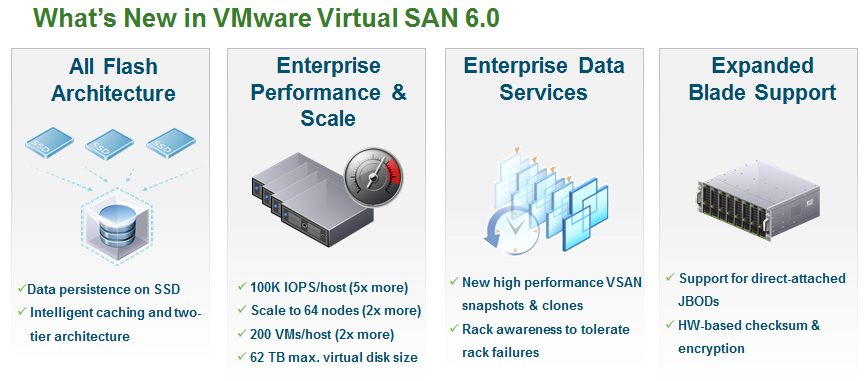

The version of VSAN goes from 1.0 in vSphere 5.5 to 6.0, which brings it in line with the new version of vSphere. I’m sure VMware did this to avoid confusion and ensure people didn’t think of it as a separate product from vSphere. The big jump from 1.0 to 6.0 is warranted, though, as there are a ton of new features and enhancements under the covers that greatly improve the usability, scalability and availability of VSAN and make it a truly enterprise-worthy storage array. Here is a summary of the big things that are new with VSAN in vSphere 6:

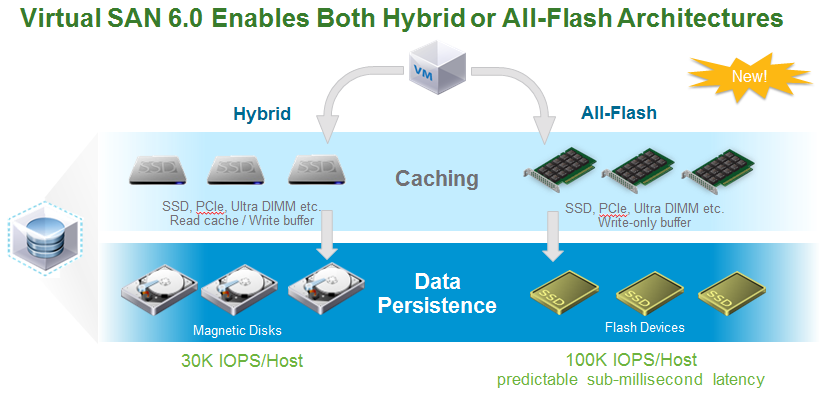

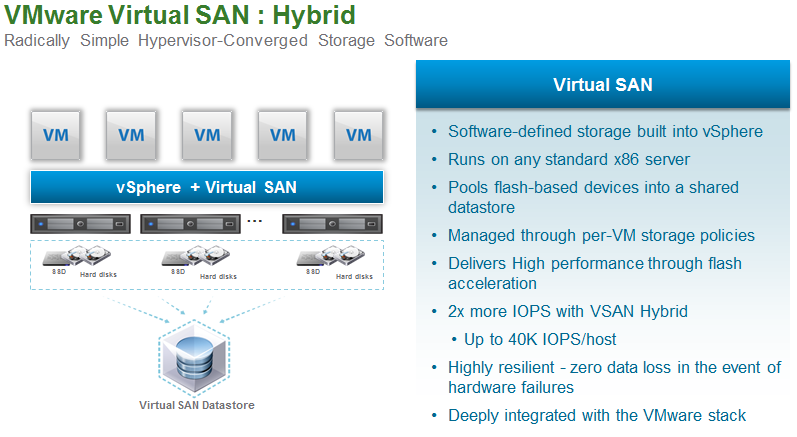

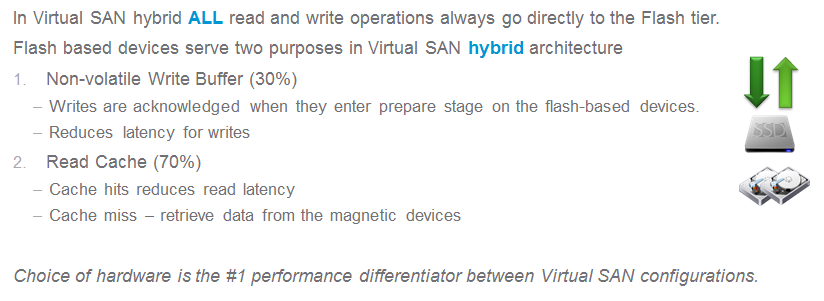

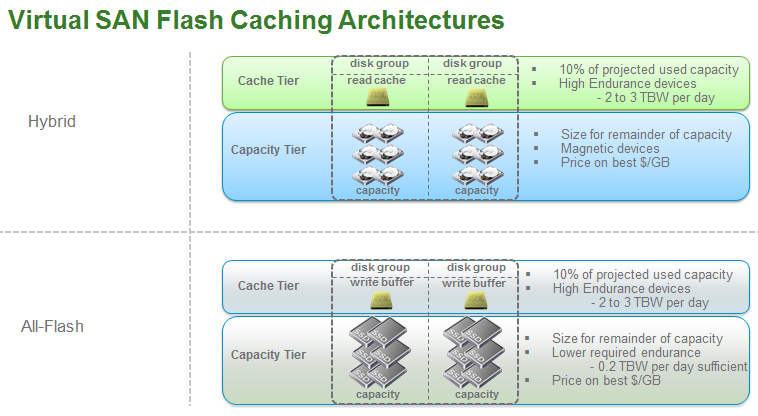

Two deployment modes, Hybrid or All-Flash

SSDs are no longer used just for read caching and write buffering; they can now be used as primary storage as well, in an All-Flash mode. The traditional model of magnetic hard disks plus SSDs is now referred to as Hybrid mode. In Hybrid mode the SSDs function only as a read cache and write buffer, as they did in vSphere 5.5; persistent data cannot be written to the SSD tier.

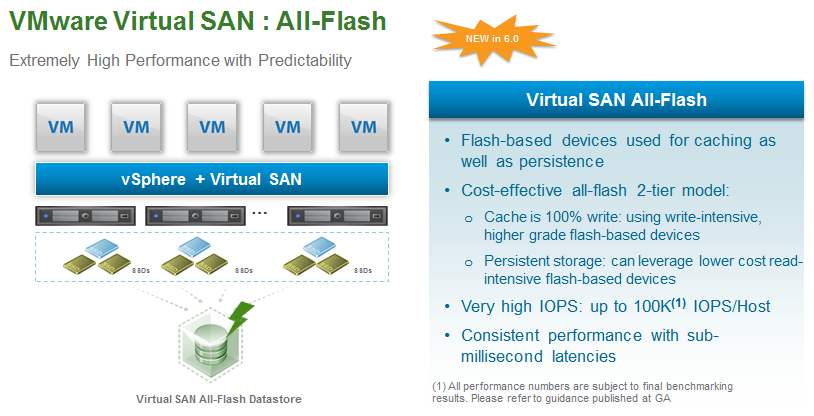

All-Flash VSAN

No more spinning disk requirement: VMware is now an All-Flash vendor, as SSDs can be used to store persistent data. In this mode SSDs are still used for caching if you want to use different SSD classes for storage tiers. All-Flash VSAN supports a new, more cost-effective 2-tier model that uses write-intensive, higher-grade flash devices (e.g. SLC) for 100% of write buffering and lower-cost, read-intensive flash devices (e.g. MLC or TLC) for data persistence.

Faster and bigger VSAN clusters

In vSphere 6 the number of hosts per cluster has increased from 32 to 64 (2x), the number of IOPS per host from 20K to 100K (5x), the number of VMs per host from 100 to 200 (2x) and the number of VMs per cluster from 3200 to 6000. In addition, the maximum supported virtual disk size has increased from 2TB to 62TB.
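As a quick sanity check on those multipliers, here is a small Python sketch that computes the growth factor for each limit. It uses only the figures quoted in this post (I haven't re-verified them against VMware's configuration maximums), and the `LIMITS` table and `growth_factor` helper are names made up for illustration.

```python
# Limits as quoted in this post: (vSphere 5.5 value, vSphere 6 value).
# These are the article's figures, not independently verified.
LIMITS = {
    "hosts per cluster": (32, 64),
    "IOPS per host": (20_000, 100_000),
    "VMs per host": (100, 200),
    "VMs per cluster": (3200, 6000),
}


def growth_factor(old, new):
    """Return how many times larger the new limit is than the old one."""
    return new / old


for name, (old, new) in LIMITS.items():
    print(f"{name}: {old} -> {new} ({growth_factor(old, new):g}x)")
```

Note that the per-cluster VM limit grows a bit less than 2x (1.875x), and that 6000 is well below 64 hosts × 200 VMs = 12,800, so on these numbers the cluster-wide limit is the one you would hit first in a fully populated cluster.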

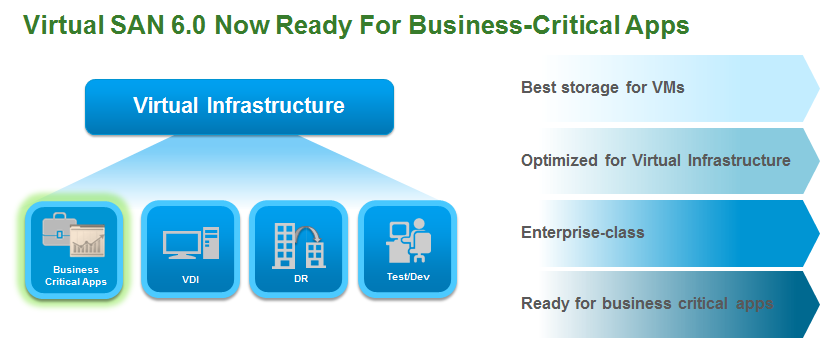

Enterprise performance & scale

In vSphere 5.5 VMware had outlined specific use cases for VSAN, which included just about everything but Tier-1 apps. With the increase in IOPS from an All-Flash configuration and scaling up to 64 nodes, VMware has now declared VSAN ready for Tier-1 enterprise apps.

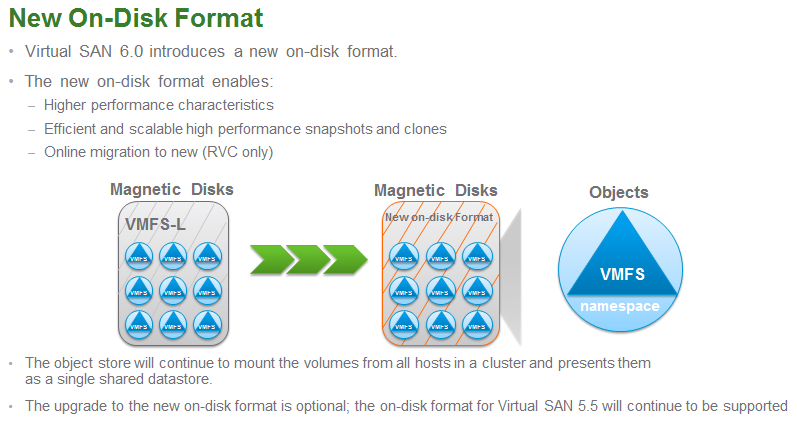

New file system

VSAN in vSphere 5.5 used VMFS-L, a modified file system based on VMFS with the locking mechanisms removed. vSphere 6 uses a whole new file system called VSAN FS that is optimized for the VSAN architecture. The upgrade to the new file system is optional, but you’ll want to do it so you don’t miss out on some of the new features and scalability that VSAN has. It was reported a while back that the upgrade would be disruptive, which would make it a royal pain to do, but VMware claims there is now an online migration from VMFS-L to VSAN FS.

Network improvements

On the network side VSAN now supports Layer 3 network configurations; apparently this was frequently requested. VSAN also now supports Jumbo Frames, which may give a tiny boost in performance and help reduce CPU overhead in larger deployments. VSAN supports both Standard and Distributed vSwitches, but vDS is recommended so you can leverage Network I/O Control.

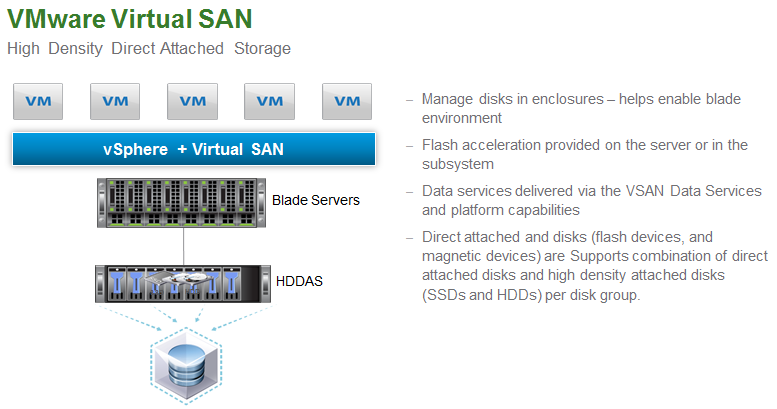

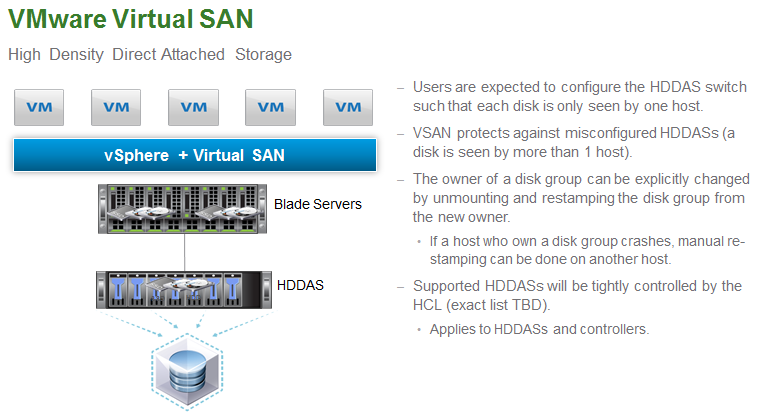

High Density Attached Storage

This new hardware support allows for denser VSAN nodes using external JBOD disks and also opens the door to using blade servers as hosts, which were previously not good candidates for VSAN due to limited internal disk. The support for this will be tightly controlled by VMware’s HCL for VSAN.

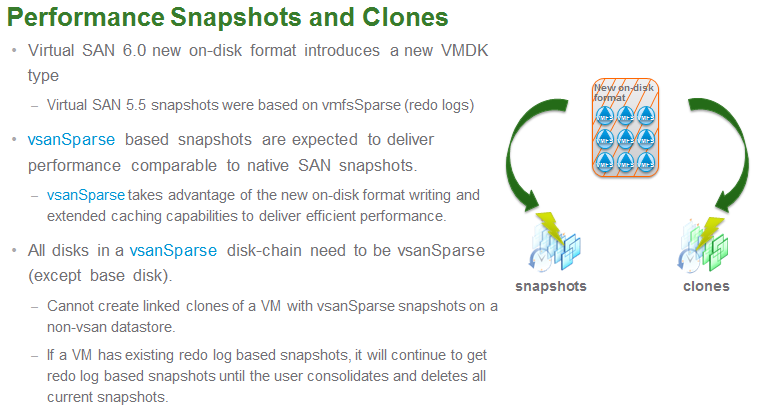

New vsanSparse VMDK type

This new VMDK type was created to address efficiency concerns with snapshots and clones, which were previously based on the traditional redo-log VM snapshot. It takes advantage of the new VSAN FS writing and extended caching capabilities to deliver much better performance. VMware claims that this puts VSAN snapshots on par with native SAN snapshots.

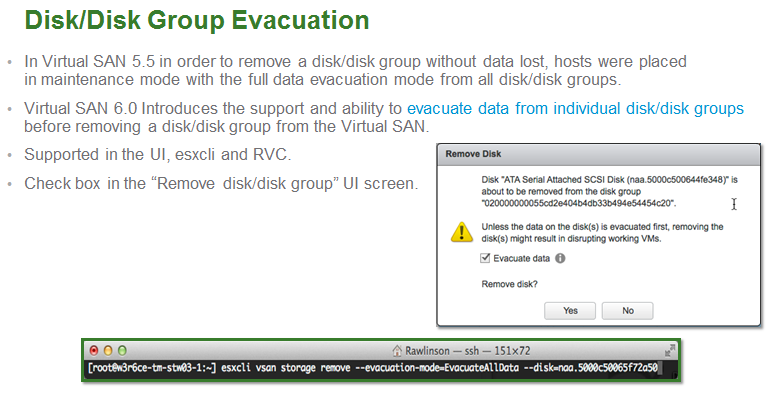

Improved Disk/Disk Group Evacuation

Replacing a disk in vSphere 5.5 was a pain, as you had to put a host in maintenance mode before doing it. In vSphere 6 you can evacuate data from individual disks and disk groups, which makes the process much less disruptive.

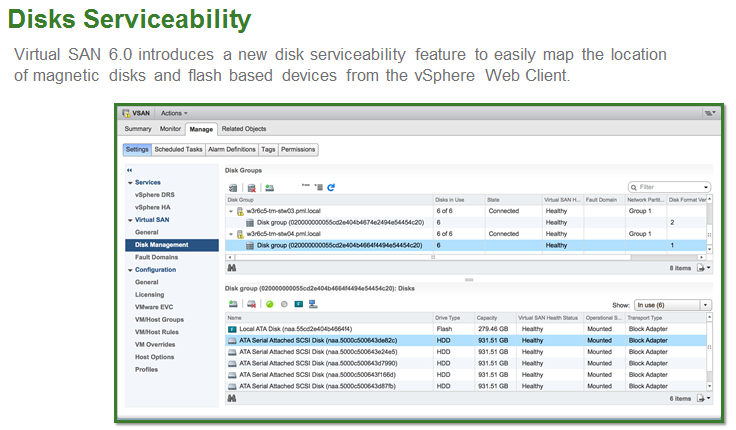

New Disk Serviceability

vSphere 6 makes it easier to map out physical disks in a VSAN node by introducing a new disk serviceability feature that lets you view individual disks from within the vSphere client. There is also more interaction with disk hardware: you can now turn disk lights on and off to make sure you are yanking the correct drive when replacing it. You can also now explicitly tag disks as SSD or local disk when they might otherwise not be recognized correctly.

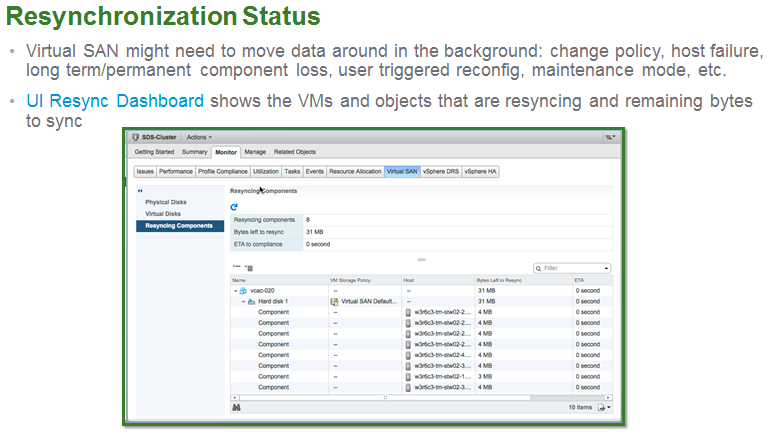

New Resynchronization Dashboard

A new Resynchronization Dashboard in the vSphere Client allows you to monitor the status of VMs and objects that might be resyncing due to policy changes, failures, etc. It provides you information on the bytes left to sync and the approximate time that it will finish.
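To make the "bytes left" and "approximate finish time" relationship concrete, here is a minimal Python sketch of how such an estimate can be derived from an observed sync rate. This is purely illustrative: `estimate_eta` is a hypothetical helper, and I'm not claiming this is how the dashboard actually calculates its figure.

```python
from datetime import timedelta


def estimate_eta(bytes_left, bytes_per_second):
    """Estimate time remaining for a resync from bytes left and an observed rate.

    Returns None when the rate is zero or negative, since no estimate is possible.
    """
    if bytes_per_second <= 0:
        return None
    return timedelta(seconds=bytes_left / bytes_per_second)


# Hypothetical example: 120 GiB left to sync at an observed 200 MiB/s.
eta = estimate_eta(120 * 1024**3, 200 * 1024**2)
print(f"Estimated time remaining: {eta}")
```

An estimate like this is only as good as the rate you feed it, which is why a real resync ETA tends to move around as other I/O competes for the same disks and network.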

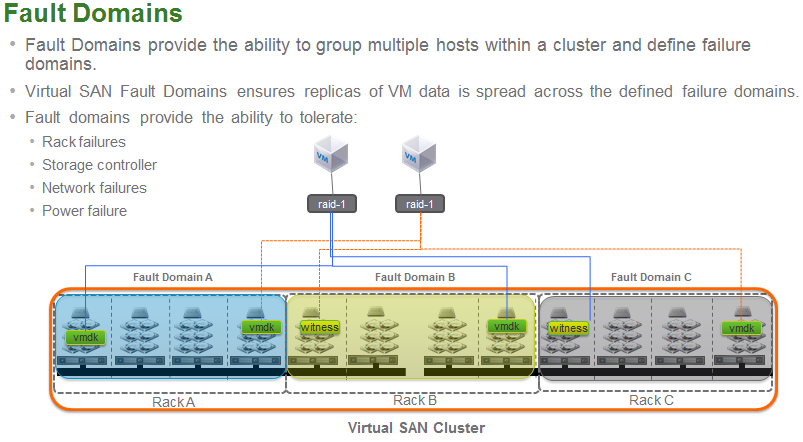

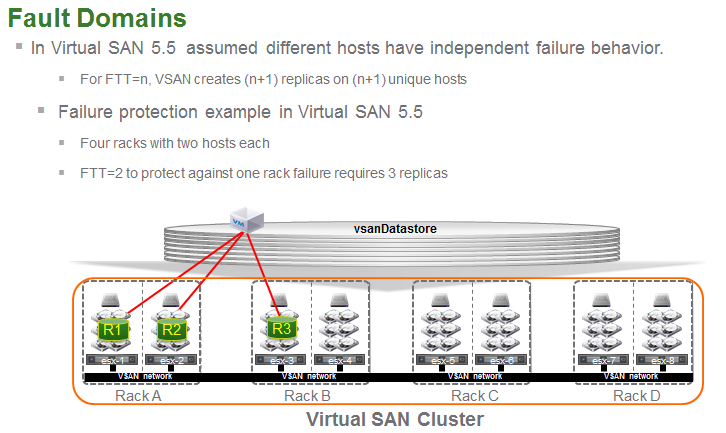

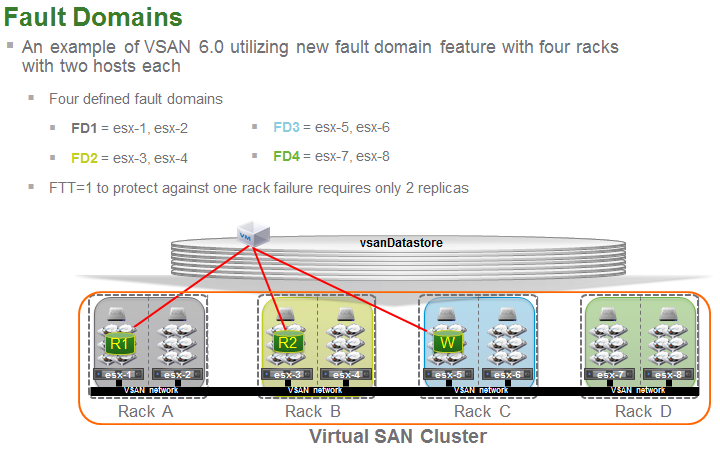

Better Fault Domains

You can now define Fault Domains that group multiple hosts within a cluster, so that VM replicas are spread across the defined Fault Domains. This new ability helps improve resiliency and protects against specific failure scenarios that might be highly disruptive to VSAN, such as a rack, network or power failure.
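The idea behind fault-domain-aware placement can be sketched in a few lines of Python. This is a toy model under stated assumptions, not VSAN's actual placement logic: the `place_replicas` function, the host names and the rack names are all hypothetical, and the sketch ignores witness components, capacity and load.

```python
from collections import defaultdict


def place_replicas(host_to_domain, replica_count):
    """Pick one host per fault domain until replica_count hosts are chosen.

    host_to_domain maps a host name to its fault domain name.
    Raises ValueError if there are fewer fault domains than replicas.
    """
    hosts_by_domain = defaultdict(list)
    for host, domain in sorted(host_to_domain.items()):
        hosts_by_domain[domain].append(host)

    if len(hosts_by_domain) < replica_count:
        raise ValueError("not enough fault domains to separate all replicas")

    # Take the first host from each of the first replica_count domains,
    # with domains ordered by name so the result is deterministic.
    chosen_domains = sorted(hosts_by_domain)[:replica_count]
    return [hosts_by_domain[domain][0] for domain in chosen_domains]


# Hypothetical cluster: four hosts split across two racks.
hosts = {"esx01": "rack-a", "esx02": "rack-a", "esx03": "rack-b", "esx04": "rack-b"}
print(place_replicas(hosts, 2))  # ['esx01', 'esx03']
```

The point of the constraint is that no two replicas of the same object ever land in the same domain, so losing an entire rack takes out at most one copy.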

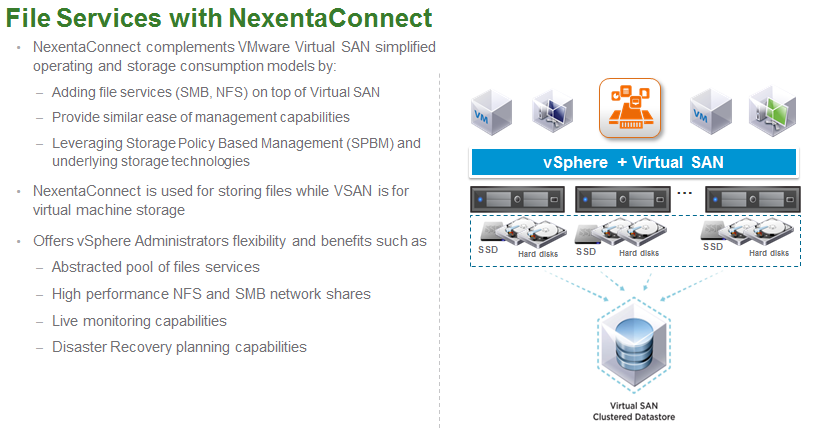

New 3rd Party File Services

This new ability allows 3rd parties to layer value-added capabilities and services on top of VSAN. The one being featured is File Services with NexentaConnect, which essentially adds protocol support (SMB, NFS) to VSAN. This allows VSAN to be leveraged beyond the vSphere hosts in a cluster by any server in a data center that can use those protocols.

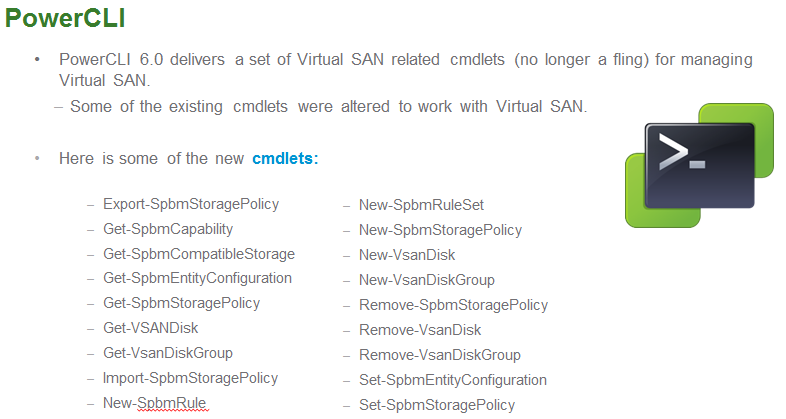

PowerCLI cmdlets

In vSphere 5.5 VMware provided unofficial PowerCLI support for VSAN through one of its Flings. In vSphere 6 these cmdlets are officially integrated and supported, along with some new ones.

VSAN Health Services

No, VMware isn’t branching out into healthcare. VSAN Health Services provides in-depth health information on VSAN subsystems and their dependencies so you can stay on top of the health of your VSAN environment and call a doctor when it needs one.

1 comment

Have you found any way to use PowerCLI to trigger the various vSAN health tests that are available from the web client? I would have thought there would be cmdlets for this in the PowerCLI 6 R3 VMware.VimAutomation.Storage module, but alas I came up short. Any help is appreciated.